Part 10 of 58

The Backward Signal

By Madhav Kaushish · Ages 12+

The two-layer network solved Jvelthra's fungus problem beautifully. But Trviksha had glossed over something when she said she "trained all the weights simultaneously." For the simple two-input case, she had managed it by brute-force trial and error, testing many weight combinations until the accuracy improved. That approach would not scale.

For a single perceptron, weight adjustment was straightforward. Drysska computed an output, compared it to the true answer, and adjusted her weights toward less error. The error was right there — the difference between prediction and reality.
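Drysska's single-perceptron rule can be sketched in a few lines of Python. This is a minimal sketch under assumptions the text does not fix: a step activation (output 1 if the weighted sum is positive, else 0), an error defined as `target - prediction`, and a learning rate `rate`. The function names `predict` and `update` are hypothetical.

```python
def predict(weights, bias, inputs):
    """Step-function perceptron: 1 if the weighted sum is positive, else 0 (assumed activation)."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0


def update(weights, bias, inputs, target, rate=0.1):
    """Nudge each weight in the direction that reduces the error.

    error = target - prediction. Each weight moves by rate * error * its input,
    so inputs that were active when the mistake happened change the most.
    """
    error = target - predict(weights, bias, inputs)
    new_weights = [w + rate * error * x for w, x in zip(weights, inputs)]
    new_bias = bias + rate * error
    return new_weights, new_bias
```

When the prediction is already correct, the error is zero and the weights stay exactly where they are; only mistakes move them.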

For the hidden layer, the situation was fundamentally different.

The Problem

Plonkva and Grinjka did not have a "true answer" to compare against. Their outputs were intermediate — internal to the network, never observed in the real world. No one could say what Plonkva's output should have been for any given grain store, because Plonkva's output was not a prediction about grain stores. It was a feature — a transformation that only had meaning in the context of the full network.

When the final prediction was wrong, Trviksha knew Drysska's output was wrong. But she did not know whether the fault lay in Drysska's weights, in Plonkva's weights, in Grinjka's weights, or in some combination.

Trviksha: When Drysska gets the answer wrong, who is to blame?

Blortz: Perhaps everyone, in proportion.

Trviksha: In proportion to what?

Blortz: In proportion to how much each velociraptor contributed to the error. If Plonkva's output was heavily weighted in Drysska's computation — if Drysska relied on Plonkva a lot — then Plonkva bears more of the blame. If Grinjka's output barely mattered to the final answer, Grinjka bears less.

The Backward Pass

Trviksha formalised Blortz's idea into a procedure.

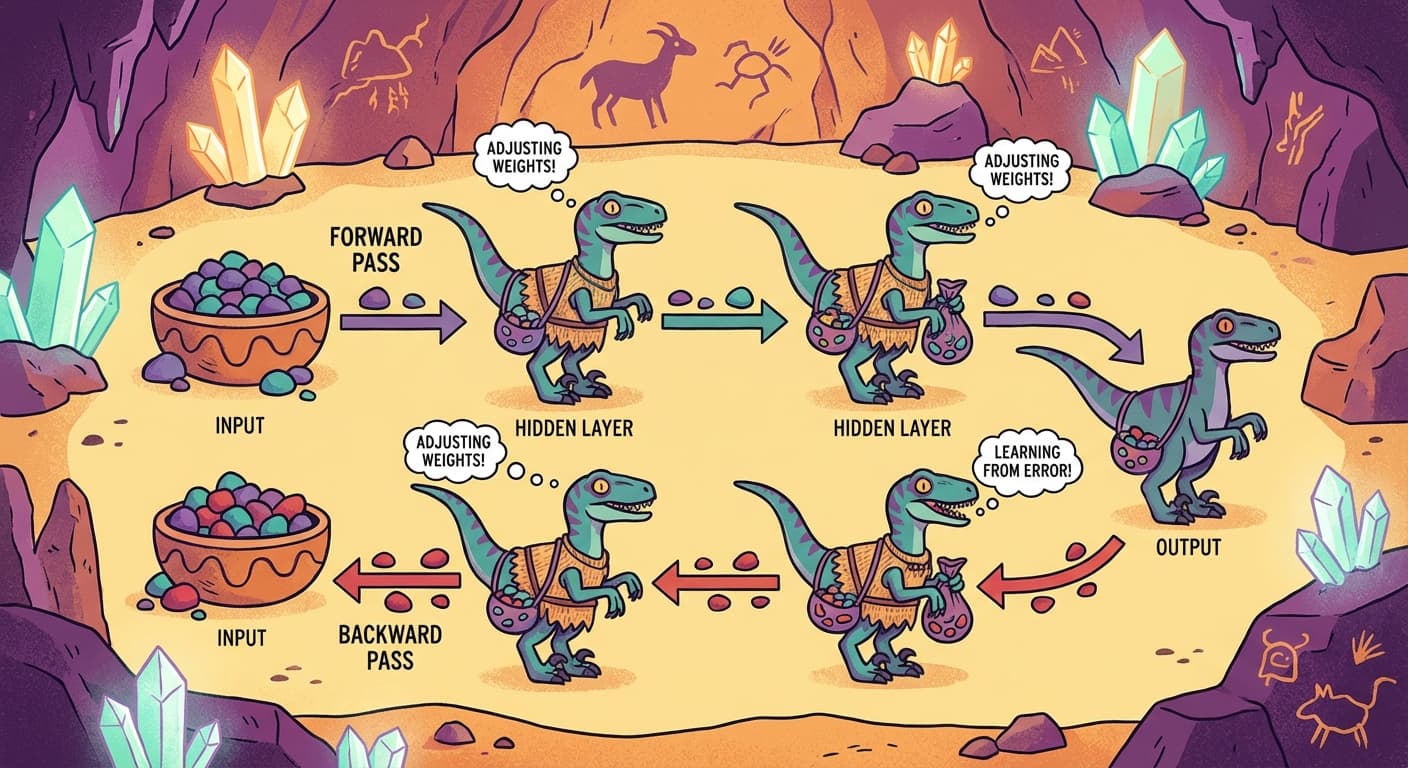

First, the forward pass: inputs flow through the network from left to right, each velociraptor computing its output and passing it to the next row. At the end, Drysska produces the final prediction.
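The forward pass for a network like Jvelthra's (two inputs, two hidden velociraptors, one output) might look like this in Python. It is a sketch, assuming smooth sigmoid units rather than the step function of a plain perceptron; the function names and the weight layout (`hidden_w[j]` holds hidden unit j's input weights, `out_w[j]` is the weight from hidden unit j to the output) are hypothetical choices.

```python
import math


def sigmoid(z):
    """Smooth squashing function (an assumed activation)."""
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, hidden_w, hidden_b, out_w, out_b):
    """Left-to-right pass: every hidden unit first, then the output unit.

    Returns the hidden outputs (Plonkva, Grinjka) and the final prediction (Drysska).
    """
    hidden = [sigmoid(b + sum(w * xi for w, xi in zip(ws, x)))
              for ws, b in zip(hidden_w, hidden_b)]
    output = sigmoid(out_b + sum(w * h for w, h in zip(out_w, hidden)))
    return hidden, output
```

The hidden outputs are returned alongside the prediction because the backward pass needs them.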

Then, the backward pass: the error flows from right to left, each velociraptor receiving a share of the blame and adjusting its weights.

Step 1: Drysska computes the output. Compare it to the true answer. The difference is the output error.

Step 2: Drysska adjusts her own weights using the output error — exactly as before, nudging in the direction that reduces the error.

Step 3: Drysska sends a signal backward to Plonkva and Grinjka. The signal to each hidden velociraptor is: "the output error, multiplied by the weight connecting you to me," using that weight as it stood during the forward pass. If Plonkva's output had a large weight in Drysska's sum, Plonkva receives a large error signal. If Grinjka's weight was small, Grinjka receives a small error signal.

Step 4: Plonkva and Grinjka each adjust their own weights using the error signals they received — the same adjustment rule as Drysska, but applied to the backward-propagated error instead of the direct output error.
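Steps 1 to 4 can be sketched in Python for a single training example. This is a minimal sketch under assumptions the text does not make: sigmoid units, squared error, and a learning rate `rate`. With smooth sigmoid units, standard backpropagation multiplies the Step 3 signal not only by the connecting weight but also by the slope of the hidden velociraptor's own activation, so that factor appears below. The hidden signals are computed from Drysska's weights as they were in the forward pass, before Step 2 changes them. The name `train_step` is hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_step(x, target, hidden_w, hidden_b, out_w, out_b, rate=0.5):
    """One forward pass plus Steps 1-4 for a two-layer sigmoid network.

    Assumes squared error E = 0.5 * (output - target) ** 2.
    Returns updated (hidden_w, hidden_b, out_w, out_b).
    """
    # Forward pass: hidden units, then the output unit.
    hidden = [sigmoid(b + sum(w * xi for w, xi in zip(ws, x)))
              for ws, b in zip(hidden_w, hidden_b)]
    output = sigmoid(out_b + sum(w * h for w, h in zip(out_w, hidden)))

    # Step 1: output error, scaled by the slope of the output sigmoid.
    out_delta = (output - target) * output * (1 - output)

    # Step 3 (computed before Step 2 so it uses the forward-pass weights):
    # each hidden unit's signal = output signal * connecting weight * own slope.
    hidden_delta = [out_delta * w * h * (1 - h) for w, h in zip(out_w, hidden)]

    # Step 2: the output unit moves its weights against its error signal.
    new_out_w = [w - rate * out_delta * h for w, h in zip(out_w, hidden)]
    new_out_b = out_b - rate * out_delta

    # Step 4: each hidden unit adjusts its input weights with its own signal.
    new_hidden_w = [[w - rate * d * xi for w, xi in zip(ws, x)]
                    for ws, d in zip(hidden_w, hidden_delta)]
    new_hidden_b = [b - rate * d for b, d in zip(hidden_b, hidden_delta)]
    return new_hidden_w, new_hidden_b, new_out_w, new_out_b
```

Repeating `train_step` over every example, many times, is the whole training procedure.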

The error flowed backward through the network. Each hidden velociraptor adjusted its weights based on how much it had contributed to the final error, even though it never saw the final answer directly.
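One way to see that the backward signal really measures "how much it had contributed" is to compare it with a brute-force measurement in the spirit of Trviksha's trial and error: nudge one of Plonkva's weights up and down by a tiny amount and watch how the error changes. The sketch below assumes sigmoid units, squared error, and no biases; all function names are hypothetical.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def loss(x, target, hidden_w, out_w):
    """Squared error of a two-layer sigmoid network (biases omitted for brevity)."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x))) for ws in hidden_w]
    output = sigmoid(sum(w * h for w, h in zip(out_w, hidden)))
    return 0.5 * (output - target) ** 2


def backprop_grad(x, target, hidden_w, out_w, j, i):
    """Sensitivity of the error to hidden_w[j][i], from the backward signal."""
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x))) for ws in hidden_w]
    output = sigmoid(sum(w * h for w, h in zip(out_w, hidden)))
    out_delta = (output - target) * output * (1 - output)
    hidden_delta = out_delta * out_w[j] * hidden[j] * (1 - hidden[j])
    return hidden_delta * x[i]


def numeric_grad(x, target, hidden_w, out_w, j, i, eps=1e-6):
    """Same sensitivity, measured by nudging the weight and re-running the network."""
    up = [row[:] for row in hidden_w]
    down = [row[:] for row in hidden_w]
    up[j][i] += eps
    down[j][i] -= eps
    return (loss(x, target, up, out_w) - loss(x, target, down, out_w)) / (2 * eps)
```

The two numbers should agree to many decimal places; the backward pass obtains every one of them from a single sweep, instead of two extra forward passes per weight.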

The Chain

The elegance of the backward pass was that it mirrored the forward pass. Every multiplication in the forward direction became a multiplication in the backward direction. If Plonkva's output was multiplied by weight W when flowing forward to Drysska, then the error at Drysska was multiplied by the same weight W when flowing backward to Plonkva.
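The mirroring can be made concrete for one layer of weights. In this hypothetical sketch, `W[k][j]` is the weight from unit j of one row to unit k of the next. Going forward, each unit k sums over the units behind it; going backward, each unit j sums over the units in front of it, using the very same weights. (In matrix language, this is multiplication by the transpose of `W`.) Activation slopes are left out here to isolate the role of the weights.

```python
def forward_layer(W, h):
    """Forward: unit k in the next row receives the sum over j of W[k][j] * h[j]."""
    return [sum(W[k][j] * h[j] for j in range(len(h))) for k in range(len(W))]


def backward_layer(W, delta):
    """Backward: unit j in the previous row receives the sum over k of W[k][j] * delta[k].

    Each weight W[k][j] carries a signal in both directions; only the
    direction of the summation flips.
    """
    n_in = len(W[0])
    return [sum(W[k][j] * delta[k] for k in range(len(W))) for j in range(n_in)]
```

For example, if Drysska's weights from Plonkva and Grinjka are `W = [[0.8, 0.1]]` and her error signal is `[0.5]`, then `backward_layer(W, [0.5])` hands Plonkva 0.4 and Grinjka 0.05, in proportion to how heavily Drysska relied on each.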

And the principle extended to any number of layers. If there were three layers, the error would flow from the output to layer three, from layer three to layer two, from layer two to layer one. Each layer passed the error further back, scaled by its weights. The signal chained through every layer, giving every velociraptor in the network a share of the blame.

Trviksha: The rule is the same at every layer. Receive the error from the layer in front of you. Multiply it by your weights to pass a share of it to the layer behind you. Then adjust those weights. Every velociraptor follows the same backward rule, regardless of where it sits.
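Because every layer follows the same rule, a single loop can handle any number of layers. This is a sketch assuming sigmoid units, squared error, and no biases; the layout `layers[n][k][j]` (the weight from unit j of layer n's input to unit k of its output) and the name `train_step` are hypothetical. Each layer's signal for the layer behind it is computed before that layer's own weights change.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_step(layers, x, target, rate=0.5):
    """One forward and one backward pass through any number of sigmoid layers.

    Updates the weight matrices in place and returns the squared error
    measured before the update.
    """
    # Forward pass, remembering every layer's outputs for the backward pass.
    activations = [x]
    for W in layers:
        prev = activations[-1]
        activations.append([sigmoid(sum(w * a for w, a in zip(row, prev))) for row in W])

    output = activations[-1]
    error = 0.5 * sum((o - t) ** 2 for o, t in zip(output, target))
    # Signal at the output: error times the slope of each output sigmoid.
    delta = [(o - t) * o * (1 - o) for o, t in zip(output, target)]

    # Backward pass: the same rule at every layer, last layer first.
    for n in range(len(layers) - 1, -1, -1):
        W, inputs = layers[n], activations[n]
        # Share of the signal for the layer behind, through the same weights,
        # times that layer's sigmoid slope (discarded for the raw inputs).
        back = [sum(W[k][j] * delta[k] for k in range(len(W))) * a * (1 - a)
                for j, a in enumerate(inputs)]
        # Adjust this layer's weights using its own signal.
        for k, row in enumerate(W):
            for j in range(len(row)):
                row[j] -= rate * delta[k] * inputs[j]
        delta = back
    return error
```

Adding a layer means adding one more matrix to `layers`; nothing in the loop changes.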

Drysska: The rule is clear. But I notice something. Each time the error passes through a layer, it is multiplied by a weight. If the weights are small — less than one — the error shrinks. After many layers, the error signal might become so small that the first layer barely adjusts at all.
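Drysska's worry is simple arithmetic. The hypothetical helper below multiplies a starting signal of 1 by the same weight at each layer; with a weight of 0.5, ten layers shrink it to 0.5 to the tenth power, less than one thousandth. The optional `slope` factor is there because, if the units are sigmoids (an assumption), each layer also multiplies by the slope of its activation, which is at most 0.25, so the shrinking is faster still.

```python
def signal_after(layers, weight, slope=1.0, start=1.0):
    """Size of a backward signal after passing through `layers` layers.

    Each layer multiplies the signal by `weight` and by an activation
    slope (default 1, i.e. slope ignored).
    """
    signal = start
    for _ in range(layers):
        signal *= weight * slope
    return signal
```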

Trviksha: That is a problem for later. For now, two layers is enough.

Trviksha applied backward propagation to Jvelthra's twelve-factor grain store data. She built a network with twelve inputs, six hidden velociraptors, and one output. Forward pass, compute error, backward pass, adjust weights. After fifty passes through all two hundred and thirty grain stores, the accuracy reached 89%.

The network had learned. Not because anyone told the hidden layer what to compute, but because the backward signal — the chain of blame flowing from output to input — told each velociraptor how to adjust. The hidden features emerged not from design but from the pressure of errors flowing backward through the system.