Part 24 of 58

The Fading Signal

By Madhav Kaushish · Ages 12+

The recurrent network predicted one day ahead with reasonable accuracy. Vrothjelka wanted seven-day forecasts.

The Weekly Attempt

Trviksha modified the setup. Instead of processing seven days and predicting Day 8, she processed Day 1 through Day 7 and then continued running the loop for seven more steps — Day 8 through Day 14 — using the network's own predictions as inputs. At each future step, the predicted weather from the previous step fed into the loop as if it were real data.

One-day-ahead accuracy: 78%. Three-day-ahead: 64%. Seven-day-ahead: 51% — barely better than predicting the seasonal average.

Vrothjelka: Seven days out, your model is guessing.

Trviksha: The errors compound. Each day's prediction is slightly off, and that slightly-off prediction feeds into the next day. Small errors amplify through the loop.
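The compounding Trviksha describes can be sketched with a toy loop. Everything here is invented for illustration: `true_step` stands in for the real weather, `model_step` for a learned rule that is slightly off, and the numbers 1.05 and 1.08 are not from Vrothjelka's data. The only point is that the forecast is built by feeding each prediction back in as the next input.

```python
def rollout(step, start, n_steps):
    """Apply `step` repeatedly, feeding each output back in as the next input."""
    values = []
    current = start
    for _ in range(n_steps):
        current = step(current)
        values.append(current)
    return values


def true_step(x):
    # Hypothetical rule for how the real weather moves from one day to the next.
    return 1.05 * x


def model_step(x):
    # Hypothetical learned rule: close to the true one, but slightly off.
    return 1.08 * x


truth = rollout(true_step, 1.0, 7)
forecast = rollout(model_step, 1.0, 7)
errors = [abs(f - t) for f, t in zip(forecast, truth)]

for days_ahead, error in enumerate(errors, start=1):
    print(f"{days_ahead} day(s) ahead: error {error:.3f}")
```

In this toy the gap between forecast and truth widens with every step, because each new prediction starts from a value that is already wrong.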

But there was a deeper problem. Even the one-day-ahead predictions degraded when the relevant information was further back in the sequence.

The Monsoon Problem

Vrothjelka showed Trviksha a specific case. Two sequences, each thirty days long:

Sequence A: Normal conditions for twenty-nine days, then a monsoon signal on Day 30. The network, processing Day 30 with its hidden state summarising the previous twenty-nine days, correctly predicted heavy rain on Day 31.

Sequence B: A monsoon signal on Day 1, then twenty-nine days of mixed conditions. The question: given that a monsoon signal appeared on Day 1, was Day 31 more likely to have rain?

The answer was yes — early monsoon signals predicted late-season storms. But the network predicted Day 31 of Sequence B identically to a sequence with no monsoon signal at all. The Day 1 information had vanished from the hidden state by Day 31.
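A minimal sketch of this fading, assuming a hidden state made of a single number and hand-picked weights rather than trained ones. The function `run`, the weights `w_h`, `w_x`, `w_m`, and the sine-wave "mixed conditions" are all assumptions made for illustration. Two thirty-day sequences are identical except for a monsoon flag on Day 1, and the code measures how different the hidden state is after Day 30.

```python
import math


def run(inputs, w_h=0.5, w_x=0.8, w_m=1.5):
    """Scalar recurrent loop: one hidden number, updated once per day."""
    h = 0.0
    for weather, monsoon in inputs:
        h = math.tanh(w_h * h + w_x * weather + w_m * monsoon)
    return h


# Hypothetical "mixed conditions" for Days 2 to 30, the same in both sequences.
mixed = [(math.sin(day), 0.0) for day in range(2, 31)]

with_signal = [(0.0, 1.0)] + mixed     # monsoon signal on Day 1
without_signal = [(0.0, 0.0)] + mixed  # no monsoon signal at all

gap = abs(run(with_signal) - run(without_signal))
print(f"difference in hidden state after Day 30: {gap:.2e}")
```

With a loop weight of 0.5, the trace of Day 1 is at least halved at every step, so after twenty-nine more days the two hidden states differ by far less than a millionth. Whatever the network predicts for Day 31 will be the same in both cases.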

Trviksha: The hidden state is a fixed-size summary. Eight numbers. By Day 31, the information from Day 1 has been overwritten — compressed away as newer days added their own information. The hidden state can only hold so much.

Blortz: That is not the whole problem. The hidden state being small does contribute. But there is a more specific issue.

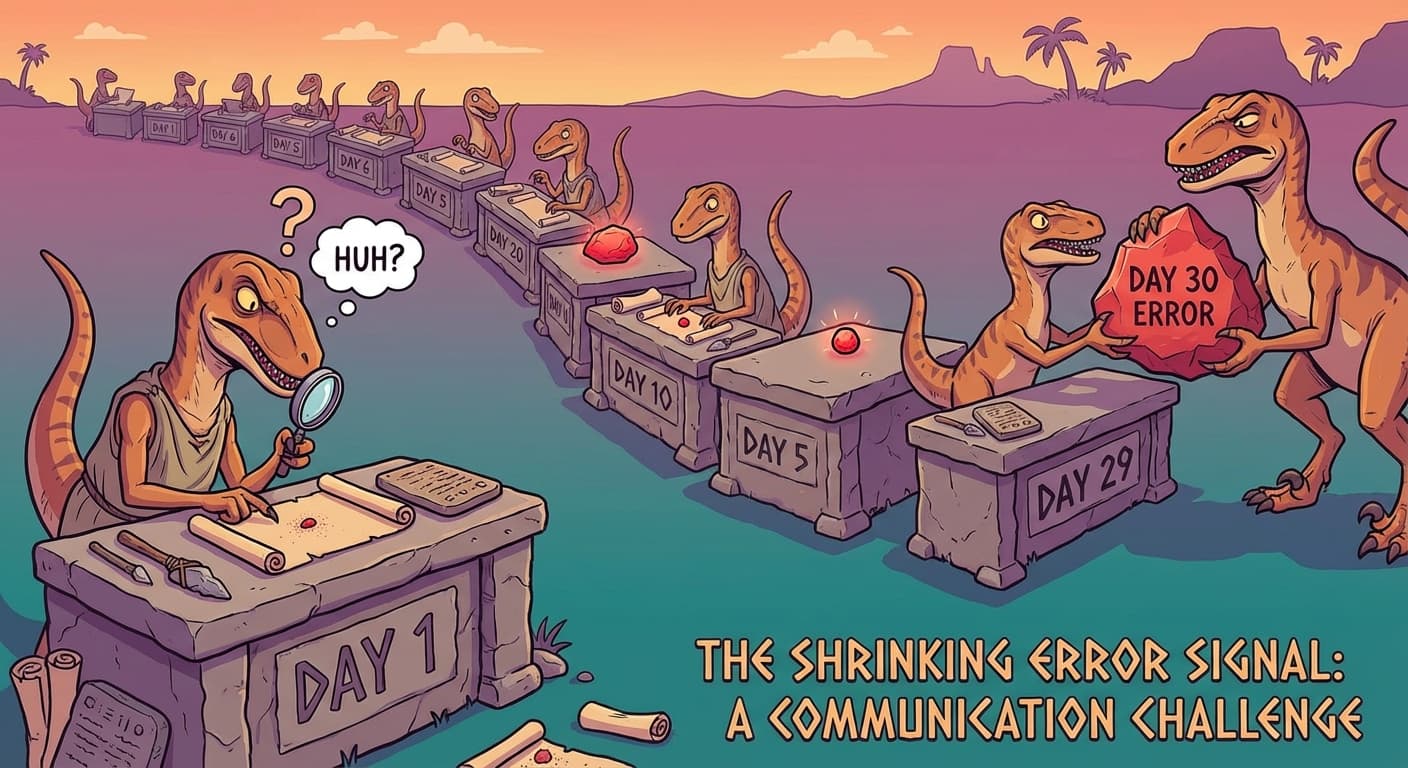

The Shrinking Error

Blortz traced the backward signal — the error flow during training. When the network processed a thirty-day sequence and made a wrong prediction on Day 31, the error signal flowed backward through time: Day 31, Day 30, Day 29... all the way back to Day 1.

At each time step, the error signal was multiplied by the weights that connected one step to the next. If those weights were less than one in magnitude, the error signal shrank at each step. After thirty steps of multiplication by numbers smaller than one, the signal was minuscule, effectively zero.

Blortz: The error signal from Day 31 reaches Day 1 after being multiplied by the same weight thirty times. If that weight is 0.9, the signal reaching Day 1 is 0.9 to the power of 30, about 0.04. Four percent of the original error. If the weight is 0.8, the signal falls to about 0.001. One-tenth of one percent.
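Blortz's arithmetic can be checked in a few lines. This sketch follows his simplified picture, in which the error is multiplied by one and the same weight at every step back in time; the function name `backward_signal` is made up for this example.

```python
def backward_signal(weight, steps, error=1.0):
    """Multiply the error by the same weight once per step back in time."""
    for _ in range(steps):
        error *= weight
    return error


for weight in (0.9, 0.8):
    remaining = backward_signal(weight, steps=30)
    print(f"weight {weight}: {remaining:.4f} of the original error reaches Day 1")
```

The two results round to the figures Blortz quotes: roughly four percent for a weight of 0.9, and roughly one-tenth of one percent for a weight of 0.8.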

Trviksha: So the velociraptor at Day 1 barely adjusts its weights in response to an error thirty days later. It does not learn from distant consequences.

Blortz: And if the weight is greater than one, the error signal grows at each step — it explodes instead of vanishing. Neither extreme is useful.
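The two extremes can be put side by side. This is a sketch under the same simplification Blortz uses, a single weight applied thirty times; the weights 1.1 and 1.2 are picked only as examples, and `signal_after` is a hypothetical helper.

```python
def signal_after(weight, steps=30):
    """Size of an error of 1.0 after `steps` multiplications by `weight`."""
    return weight ** steps


for weight in (0.9, 1.0, 1.1, 1.2):
    size = signal_after(weight)
    if size < 1.0:
        trend = "vanishes"
    elif size > 1.0:
        trend = "explodes"
    else:
        trend = "stays the same"
    print(f"weight {weight}: signal is {size:.3f} after 30 steps ({trend})")
```

A weight only slightly above one already multiplies the error many times over in thirty steps, and a weight only slightly below one all but erases it. Only a weight of exactly one carries the signal through unchanged.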

The Fundamental Limit

Trviksha: The network cannot learn long-range dependencies. If the cause is on Day 1 and the effect is on Day 31, the connection between them is too faint for the error signal to carry. The network can only learn relationships between nearby time steps.

Vrothjelka: My data is full of long-range patterns. Monsoon onset in week one affects flooding in week four. Early dry spells in spring predict late-summer droughts. These are not day-to-day effects.

Trviksha: I know. The feedback loop gives the network memory, but the memory fades. Information degrades as it passes through repeated compressions. Important signals from early in the sequence are washed away by the time they are needed.

Glagalbagal: A velociraptor with a short memory. Useful for the immediate past, unreliable for the distant past.

Trviksha: I need a way to preserve important information across many time steps without it degrading. The hidden state changes at every step — that is the problem. What I need is something that can persist unchanged, carrying information through time without the constant compression that destroys it.

She needed, in other words, a different kind of memory: not the volatile, constantly overwritten hidden state, but something more permanent. Something that could hold a piece of information across thirty steps as clearly as it held it at step one.