Part 40 of 58

The Safe Route

By Madhav Kaushish · Ages 12+

After several hundred flights, the pterodactyl had learned a route. It was not a good route, but it was a reliable one.

The Rut

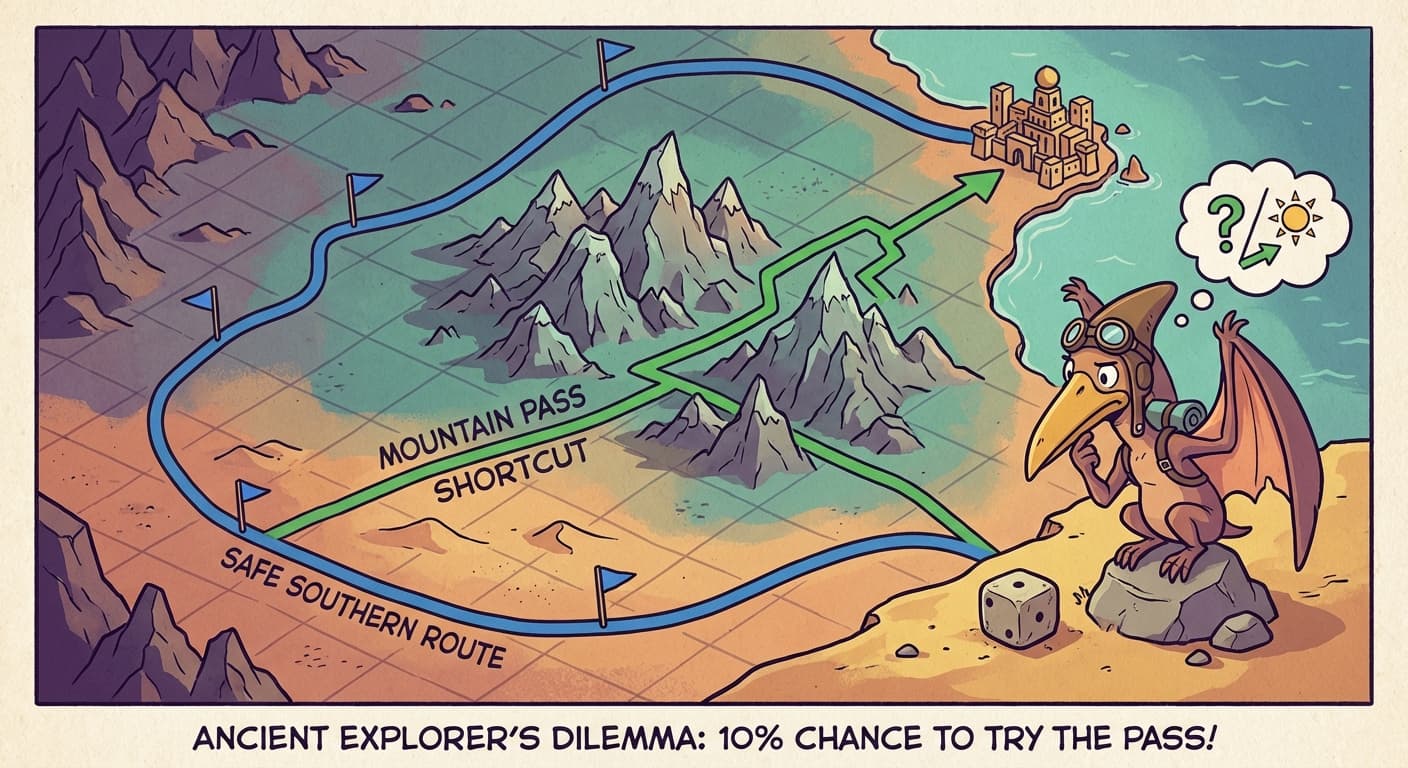

The pterodactyl had discovered that flying due south for twelve cells, then due east for eight cells, then south again for four cells reached the destination. This path was long — it took thirty-two moves, while the direct path was roughly twenty. But the southern route avoided all mountains and most storm cells. The pterodactyl had stumbled onto it early, received a positive reward, and thereafter always chose it.

Flinqva: Your pterodactyl takes the scenic route every single time. It flies south around the entire mountain range instead of through the pass, which would save fourteen moves. The pass is safe in clear weather, which is most days.

Trviksha: The pterodactyl tried the mountain pass once, early on. It happened to encounter a storm that day and received a large penalty. It has avoided the pass ever since.

Blortz: One bad experience in the pass, and it never goes back? That is not learning — that is fear.

Trviksha: It is worse than fear. The pterodactyl always takes the action with the highest estimated reward. The southern route has a positive estimated reward — it has worked every time. The mountain pass has a negative estimated reward — from that one bad flight. The pterodactyl will never discover that the pass is usually safe, because it will never try the pass again.
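Trviksha's point can be sketched in a few lines of Python. This is only an illustration, not the pterodactyl's actual code: the route names and the reward numbers (+1.0 and -5.0) are made up, and the sketch assumes the southern route keeps earning the same reward, so its estimate never drops.

```python
# Hypothetical reward estimates after the early flights (numbers are invented).
estimates = {"southern_route": 1.0, "mountain_pass": -5.0}

def greedy_choice(estimates):
    """Always pick the route with the highest estimated reward."""
    return max(estimates, key=estimates.get)

def run_greedy_flights(estimates, n_flights):
    """Count how often each route is chosen when the agent never explores."""
    counts = {route: 0 for route in estimates}
    for _ in range(n_flights):
        counts[greedy_choice(estimates)] += 1
    return counts

counts = run_greedy_flights(estimates, 1000)
print(counts)  # {'southern_route': 1000, 'mountain_pass': 0}
```

Because the pass is never chosen, its estimate is never updated, and one unlucky flight decides the matter for good.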

The Dilemma

This was the core tension. If the pterodactyl always chose the action with the highest estimated reward, it would stick with the first decent strategy it found, forever. It would never explore alternatives that might be better. It would exploit its current knowledge — and never expand it.

Trviksha: Always exploiting is safe but stagnant. The pterodactyl does what it knows works. It never discovers anything better.

Flinqva: What if you made it try random actions again?

Trviksha: If it always chose randomly, it would explore constantly but never use what it learned. It would be just as lost on flight one thousand as on flight one.

The pterodactyl needed to do both: mostly exploit its current best strategy (to get deliveries done), but occasionally explore new actions (to discover if something better existed).

The Random Nudge

Trviksha implemented a simple rule. On each move, the pterodactyl rolled a ten-sided die. Nine times out of ten, it chose the action with the highest estimated reward: exploitation. One time in ten, it chose a random action: exploration.

Trviksha: Ten percent random actions. Enough to eventually try the mountain pass again. Not so much that the pterodactyl wanders constantly.
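A rule like this is commonly called epsilon-greedy, where epsilon is the chance of a random action. Here is a minimal sketch, assuming the same two made-up routes and reward numbers as illustration. It picks a whole route per flight rather than a direction per move, which is a simplification of what the story describes.

```python
import random

def choose_route(estimates, epsilon=0.1, rng=random):
    """Explore with probability epsilon, otherwise exploit the best estimate."""
    if rng.random() < epsilon:
        # Exploration: pick any route at random (this may be the best one too).
        return rng.choice(list(estimates))
    # Exploitation: pick the route with the highest estimated reward.
    return max(estimates, key=estimates.get)

# Hypothetical estimates; the numbers are invented for illustration.
estimates = {"southern_route": 1.0, "mountain_pass": -5.0}

rng = random.Random(42)  # fixed seed so repeated runs give the same picks
picks = [choose_route(estimates, epsilon=0.1, rng=rng) for _ in range(1000)]
print("pass attempts:", picks.count("mountain_pass"))
```

With two routes, a random pick lands on the pass only about half the time, so the pass is tried on roughly five percent of flights: rare, but no longer never.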

Over the next five hundred flights, the random nudge pushed the pterodactyl through the mountain pass several times. Most of those crossings happened in clear weather and succeeded, so the pterodactyl's estimate of the pass improved. Eventually, the pass became the action with the highest estimated reward in clear weather, and the pterodactyl chose it voluntarily.
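The story does not say how the estimate is updated. One common choice, assumed here, is to nudge the estimate part of the way toward each new reward. The step size of 0.5 and the reward values below are invented for illustration.

```python
def update_estimate(estimate, reward, step_size=0.5):
    """Move the estimate a fraction of the way toward the observed reward."""
    return estimate + step_size * (reward - estimate)

# Hypothetical numbers: one stormy flight left the pass at -5.0,
# and a clear-weather crossing is assumed to earn +2.0.
southern_estimate = 1.0
pass_estimate = -5.0
clear_weather_reward = 2.0

crossings = 0
while pass_estimate <= southern_estimate:
    pass_estimate = update_estimate(pass_estimate, clear_weather_reward)
    crossings += 1
    print(crossings, pass_estimate)
# prints:
# 1 -1.5
# 2 0.25
# 3 1.125
```

Under these assumptions, three clear-weather crossings are enough for the pass to overtake the southern route. A smaller step size would make the pterodactyl slower to forgive the pass, but also less easily fooled by a single lucky flight.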

The new route (through the pass in clear weather, around the mountains in storms) took an average of twenty-two moves instead of thirty-two, an improvement of roughly thirty percent.

Flinqva: Better. But ten percent random actions means one in ten moves is still wasted on exploration.

Trviksha: I can decrease the exploration rate over time. Early on, when the pterodactyl knows little, explore more: twenty or thirty percent random. Later, when it has tried most actions in most states, explore less: five percent, or even one percent. The exploration rate should shrink as the agent's knowledge grows.

Blortz: The balance between exploring and exploiting shifts over time. A young agent should explore widely. An experienced agent should mostly exploit, with occasional exploration to adapt to changes.

Trviksha: And the terrain does change — storms shift seasonally, the marshes flood in spring, new obstacles appear. Even an experienced agent needs some exploration to stay current.
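A shrinking exploration rate that never reaches zero might look like the sketch below. The starting rate of thirty percent and the floor of one percent come from the conversation above. The decay factor of 0.995 per flight is an assumption chosen for illustration, and other schedules would work too.

```python
def exploration_rate(flight, start=0.3, floor=0.01, decay=0.995):
    """Exploration rate for a given flight number (0 = first flight).

    The rate shrinks by a constant factor each flight but never drops
    below the floor, so the agent keeps a little exploration forever.
    """
    return max(floor, start * decay ** flight)

for flight in (0, 100, 500, 1000):
    print(flight, round(exploration_rate(flight), 3))
```

The floor is what keeps an experienced agent current: even after thousands of flights, about one action in a hundred is still a random try, enough to notice when a storm pattern shifts or a marsh floods.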

The exploration-exploitation tradeoff was not specific to pterodactyls. It applied to any agent learning from experience in a changing world. Too much exploitation meant stagnation. Too much exploration meant wasted effort. The right balance depended on how much the agent had already learned and how much the environment was changing.