Part 49 of 58

The Quadratic Wall

By Madhav Kaushish · Ages 12+

Zhrondvik was satisfied with the aligned language model. He wanted to expand its capabilities — specifically, he wanted it to process the entire legal code of Sonhlagot.

The Legal Code

The Sonhlagot legal code was enormous. Accumulated over centuries, it comprised roughly one million tokens — statutes, precedents, amendments, interpretations, and commentary. Every decision Zhrondvik made had to be consistent with this code, and currently, a team of twenty legal scholars spent their days cross-referencing relevant statutes for each policy question.

Zhrondvik: I want your model to have the entire legal code available when answering questions. Not a summary. Not selected excerpts. The entire code.

Trviksha: The transformer processes a context window — a sequence of tokens it can attend to. Currently, the window is about four thousand tokens. Your legal code is a million tokens.

Zhrondvik: Make the window bigger.

The Wall

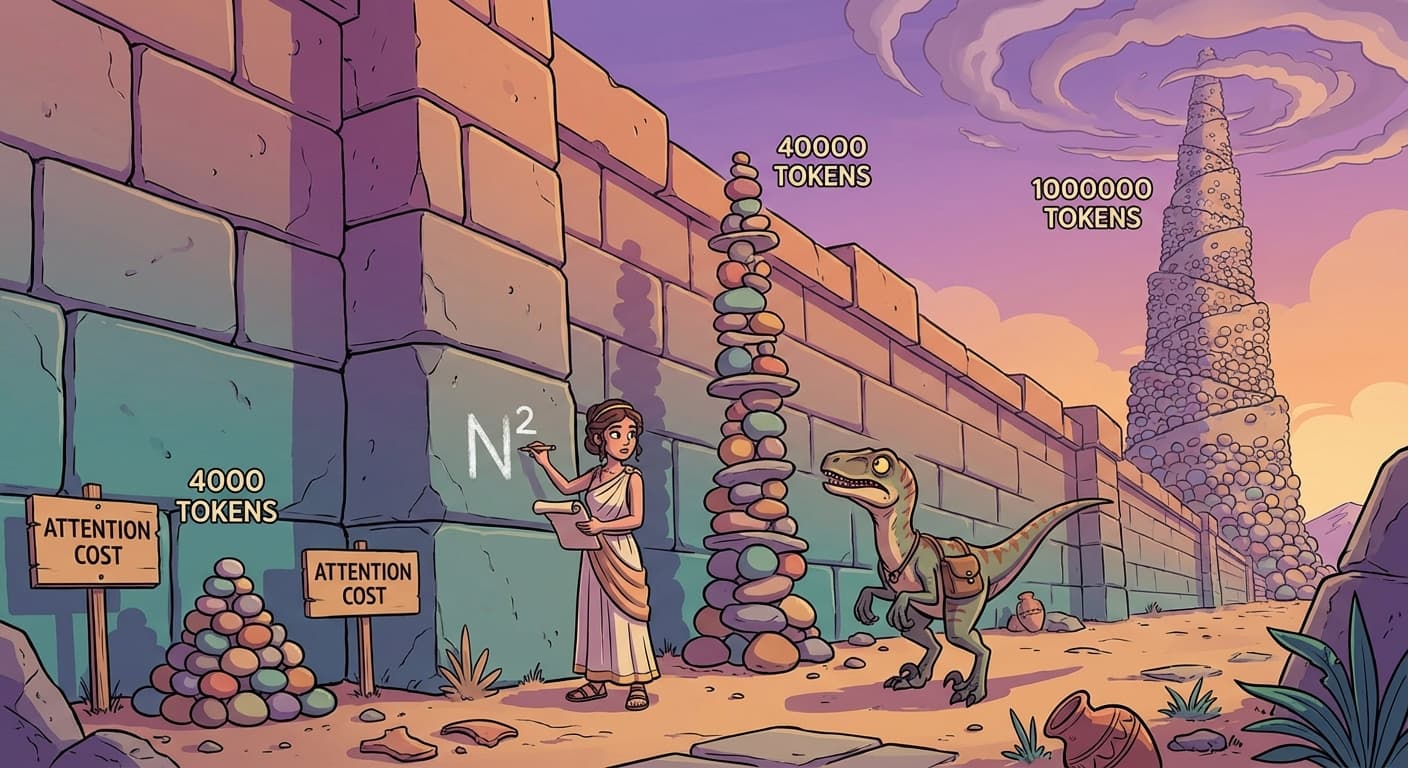

Trviksha ran the numbers. In the transformer's attention mechanism, every token attended to every other token. For a context window of N tokens, each token computed N relevance scores. Across all N tokens, that was N times N total comparisons.

| Context window | Attention comparisons |
|---|---|
| 4,000 tokens | 16 million |
| 40,000 tokens | 1.6 billion |
| 400,000 tokens | 160 billion |
| 1,000,000 tokens | 1 trillion |
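The table can be reproduced with a minimal Python sketch. The function names are hypothetical, and a plain dot product stands in for whatever relevance score the model actually computes. The double loop is the point: one score for every (attending token, attended token) pair, so N tokens cost N times N comparisons.

```python
def full_attention_scores(queries, keys):
    """Compute every query-key relevance score (a plain dot product here).

    Returns the N x N score matrix and the number of comparisons made.
    """
    scores = []
    comparisons = 0
    for q in queries:  # one row per token doing the attending
        row = []
        for k in keys:  # one column per token being attended to
            row.append(sum(a * b for a, b in zip(q, k)))
            comparisons += 1
        scores.append(row)
    return scores, comparisons


def full_attention_cost(n_tokens):
    """Comparisons for one layer of full attention: N times N."""
    return n_tokens * n_tokens


# Tiny example: 4 tokens, each a 2-dimensional vector.
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]
scores, count = full_attention_scores(vectors, vectors)
print(count)  # 16 = 4 * 4

# The rows of the table above.
for n in (4_000, 40_000, 400_000, 1_000_000):
    print(f"{n:>9,} tokens -> {full_attention_cost(n):,} comparisons")
```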

Blortz: One trillion attention comparisons. Per layer. With twelve layers, that is twelve trillion. You do not have twelve trillion velociraptors.

Trviksha: I do not have twelve thousand. The quadratic cost is a wall. Doubling the context window quadruples the computation. Going from four thousand to a million tokens — a two-hundred-fifty-fold increase — multiplies the cost by sixty-two thousand five hundred.
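The scaling arithmetic in this exchange can be checked in a few lines. This is a sketch with hypothetical names; the twelve-layer figure is the one from the dialogue.

```python
LAYERS = 12  # layer count mentioned in the dialogue


def full_attention_cost(n_tokens, layers=1):
    """Comparisons for full attention: N * N per layer."""
    return n_tokens * n_tokens * layers


def growth_factor(old_tokens, new_tokens):
    """Factor by which full-attention cost grows when the window grows."""
    return full_attention_cost(new_tokens) / full_attention_cost(old_tokens)


print(growth_factor(4_000, 8_000))        # 4.0: doubling the window quadruples the cost
print(growth_factor(4_000, 1_000_000))    # 62500.0: a 250-fold window, squared
print(f"{full_attention_cost(1_000_000, LAYERS):,}")  # twelve trillion across all layers
```

Because the cost is the square of the window, any growth factor in the window shows up squared in the cost.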

The Compromise

Full attention — every token attending to every other token — was the ideal. It provided direct connections between any pair of positions, no matter how far apart. But for a million tokens, it was impossible.

Trviksha proposed a compromise: instead of full attention, each token would attend to only a subset of other tokens.

Local attention: Each token attended to the tokens within a window around it — say, the nearest five hundred tokens in each direction. This captured local context efficiently.

Global tokens: A small number of special positions — one every thousand tokens — attended to all other positions and were attended to by all other positions. These served as hubs that could relay information between distant parts of the document.
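The two rules above can be written down as a function that lists which positions a token attends to. This is a minimal sketch under a hypothetical layout: global tokens sit at positions 0, `global_every`, 2 × `global_every`, and so on, and `half_window` is the number of neighbours on each side.

```python
def attended_positions(i, n_tokens, half_window, global_every):
    """Positions that token i attends to under local + global attention.

    Hypothetical layout: global tokens sit at positions 0, global_every,
    2 * global_every, ... (one every `global_every` tokens).
    """
    global_positions = set(range(0, n_tokens, global_every))
    if i in global_positions:
        # A global token attends to every position in the document.
        return set(range(n_tokens))
    # Local window: up to half_window tokens on each side, clipped at the edges.
    lo = max(0, i - half_window)
    hi = min(n_tokens, i + half_window + 1)
    # Every ordinary token also attends to all the global tokens.
    return set(range(lo, hi)) | global_positions


# Small picture: 12 tokens, 1 neighbour on each side, a global token every 6.
n = 12
for i in range(n):
    row = attended_positions(i, n, half_window=1, global_every=6)
    print("".join("#" if j in row else "." for j in range(n)))
```

In the printed grid, rows 0 and 6 are full because those are global tokens, columns 0 and 6 are full because every token reads them, and everywhere else only a narrow diagonal band is marked.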

Trviksha: Local attention handles nearby context, which is what matters most of the time. The global tokens handle long-range connections; they are the bridges between distant sections. The cost is proportional to N times the window size, plus twice N times the number of global tokens, because every position reads the global tokens and every global token reads every position. For a million tokens with a window of a thousand and a global token every thousand positions, that is roughly three billion comparisons instead of one trillion: more than a three-hundred-fold reduction.
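The count for a million tokens can be tallied directly. This sketch (hypothetical function names) keeps the three kinds of comparison separate so each can be checked: the local windows, ordinary tokens reading the global tokens, and global tokens reading every position. It ignores the handful of entries counted twice where these overlap, so it is an estimate rather than an exact count.

```python
def full_attention_cost(n_tokens):
    """Every token scores every token: N * N."""
    return n_tokens * n_tokens


def sparse_attention_terms(n_tokens, window, global_every):
    """Rough comparison counts for local + global attention."""
    n_global = n_tokens // global_every
    local = n_tokens * window           # each token scores its window
    to_globals = n_tokens * n_global    # each token scores every global token
    from_globals = n_global * n_tokens  # each global token scores every token
    return local, to_globals, from_globals


N = 1_000_000
local, to_g, from_g = sparse_attention_terms(N, window=1_000, global_every=1_000)
sparse = local + to_g + from_g
print(f"local {local:,}  to globals {to_g:,}  from globals {from_g:,}")
print(f"total {sparse:,} vs full {full_attention_cost(N):,}")
print(f"about {full_attention_cost(N) / sparse:.0f}-fold fewer comparisons")
```

Each of the three terms comes to about a billion, so counting both directions of global attention is what puts the total near three billion.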

Blortz: But you have lost something. A token at position 500,000 can no longer attend directly to a token at position 3. The information must relay through one of the global tokens.

Trviksha: Correct. The direct connection is lost. Information between distant positions must travel through the global tokens, which introduces compression: each global token carries a single summary of everything it attended to, not a copy of every individual token. For most legal queries, this is acceptable. The relevant statute and the question are usually within a few thousand tokens of each other, and stacked local attention covers that distance: each of the twelve layers reaches another five hundred tokens in each direction, so together they span about six thousand. For cross-references between distant sections, the global tokens provide an approximate connection.
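The relay can be made concrete by counting how many attention steps (one per layer) information needs to travel between two positions. Information moves from position j to position i when i attends to j, so the sketch searches backwards from the destination along "attends to" links. It uses a hypothetical scaled-down document (10,000 tokens, 5 neighbours on each side, a global token every 100 positions) because a breadth-first search over a million tokens is slow in pure Python; passing `global_every=None` gives purely local attention for comparison.

```python
from collections import deque


def attended_positions(i, n_tokens, half_window, global_every):
    """Local window around i, plus the global tokens (if any).

    Hypothetical layout: global tokens at 0, global_every, 2*global_every, ...
    Pass global_every=None for purely local attention.
    """
    is_global = global_every is not None and i % global_every == 0
    if is_global:
        return range(n_tokens)  # a global token reads every position
    lo, hi = max(0, i - half_window), min(n_tokens, i + half_window + 1)
    neighbours = set(range(lo, hi))
    if global_every is not None:
        neighbours.update(range(0, n_tokens, global_every))
    return neighbours


def hops_needed(src, dst, n_tokens, half_window, global_every):
    """Fewest attention steps for information at src to reach dst (BFS)."""
    if src == dst:
        return 0
    seen = {dst}
    frontier = deque([(dst, 0)])
    while frontier:
        pos, hops = frontier.popleft()
        for nxt in attended_positions(pos, n_tokens, half_window, global_every):
            if nxt == src:
                return hops + 1  # pos reads src directly
            if nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, hops + 1))
    return None  # unreachable under this pattern


print(hops_needed(3, 5050, 10_000, 5, 100))   # via a global token
print(hops_needed(3, 5050, 10_000, 5, None))  # purely local attention
```

With the global tokens, position 3 reaches position 5,050 in two steps (position 3 into a global token, then the global token into 5,050). With local attention alone it takes 1,010 steps, far more than a twelve-layer model has, which is why the global tokens serve as the bridge.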

Zhrondvik: Approximate is not acceptable for legal work. If Statute 847 contradicts Amendment 3,291 and the model misses the connection because it attended approximately rather than exactly, the legal advice is wrong.

Trviksha: For the rare cases where exact long-range connections matter, you will still need the legal scholars. The model handles the common cases — which is most of the work — and flags potential long-range issues for human review.