Part 57 of 58

The Noise Game

By Madhav Kaushish · Ages 12+

Trviksha needed to generate new layouts — not evaluate existing ones. She started with a thought experiment.

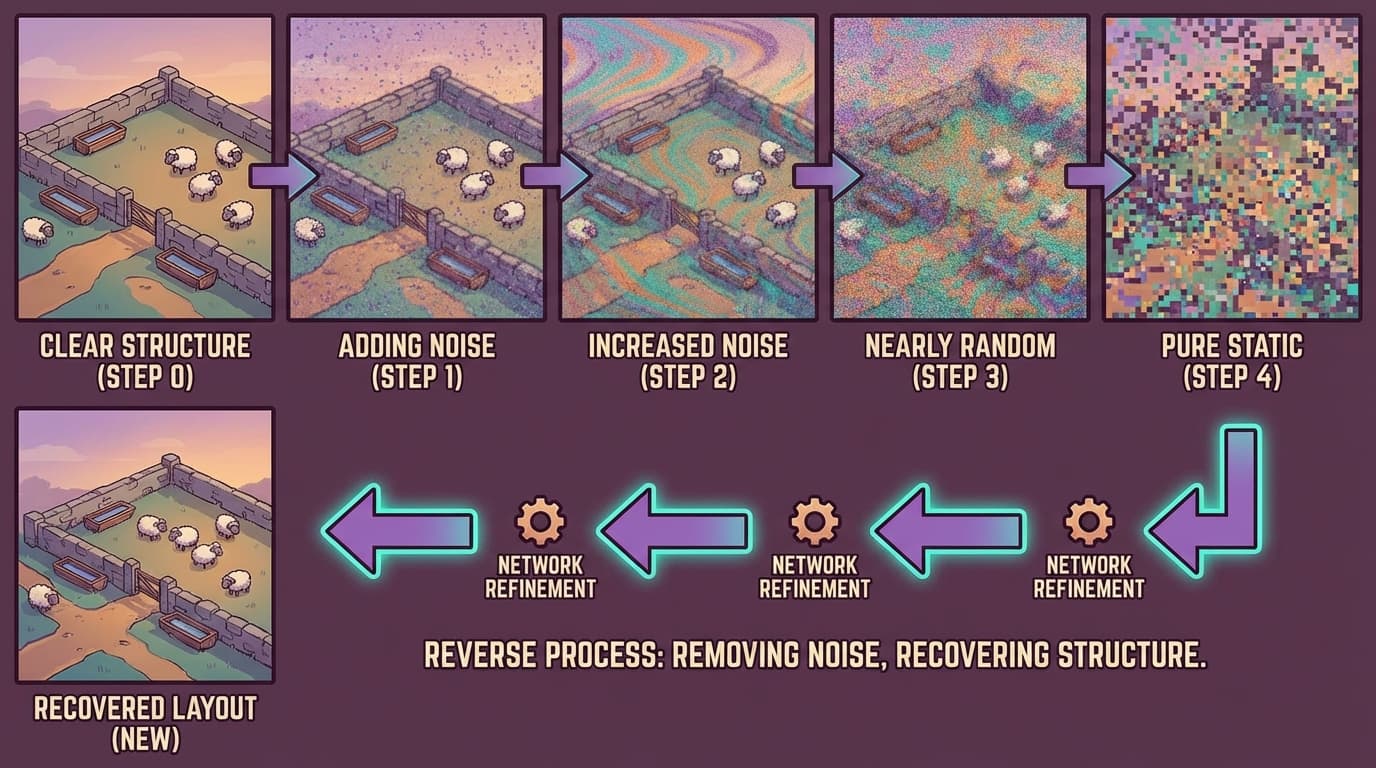

The Destruction

She took one of Kvrothja's best layouts — a productive hill-terrain design with well-placed infrastructure — and represented it as a grid of numbers. Each cell encoded the terrain type and any infrastructure present.

Then she destroyed it. Gradually.

Step 1: She added a tiny amount of random noise to every cell. The layout was still clearly recognisable — the water troughs and fences were in the same places, just with slightly fuzzy values.

Step 5: More noise. The layout was degraded — some infrastructure positions were obscured, some terrain values were distorted. But the overall structure was still visible.

Step 20: Heavy noise. The layout was barely recognisable. Rough shapes were visible, but individual features were lost.

Step 50: The layout was indistinguishable from random noise. Every trace of the original design was gone.

Trviksha: I have a process that takes a real layout and turns it into pure noise, one small step at a time. Each step adds a little randomness. After enough steps, the result is completely random — all structure destroyed.
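The destruction process can be sketched in a few lines of code. This is only an illustration under assumptions: the 3x3 grid, the cell codes, the noise size `sigma`, and the function names `add_noise_step`, `destroy`, and `drift` are all made up for this example and are not taken from Trviksha's actual setup.

```python
import random

def add_noise_step(grid, rng, sigma=0.2):
    """Return a copy of the grid with a little Gaussian noise added to every cell."""
    return [[cell + rng.gauss(0.0, sigma) for cell in row] for row in grid]

def destroy(grid, steps=50, seed=0, sigma=0.2):
    """Return every version of the grid, from step 0 (the original) to the last step."""
    rng = random.Random(seed)
    history = [grid]
    for _ in range(steps):
        history.append(add_noise_step(history[-1], rng, sigma))
    return history

def drift(grid, original):
    """Average distance between the cells of a noisy grid and the original."""
    diffs = [abs(a - b) for row_a, row_b in zip(grid, original)
             for a, b in zip(row_a, row_b)]
    return sum(diffs) / len(diffs)

# Hypothetical cell codes: 0 = grass, 1 = fence, 2 = water trough
layout = [[0.0, 1.0, 0.0],
          [0.0, 2.0, 0.0],
          [1.0, 1.0, 1.0]]

history = destroy(layout)
for step in (1, 5, 20, 50):
    print(step, round(drift(history[step], layout), 2))
```

The printed drift grows as the steps pile up, which is the gradual destruction described above. This sketch only adds noise; many practical schemes also shrink the original values a little at each step, so that the final result is noise and nothing else.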

Blortz: Why is destroying a layout useful for creating one?

Trviksha: Because if I can learn to reverse each small step — to remove a little noise and recover a little structure — then I can chain the reversals. Start from pure noise. Remove a little noise (Step 50 to Step 49). Remove more (Step 49 to Step 48). Keep going. At the end, I have a layout that looks like a real design — generated from nothing but randomness.

The Denoiser

She trained a network to reverse the destruction process one small step at a time. Given a layout at noise level 20, the network learned to produce the layout at noise level 19: slightly less noisy, slightly more structured.

The training data was free. She could take any real layout, add noise to produce the degraded version, and use the pair — noisy input, less-noisy target — as a training example. Thirty layouts, with fifty noise levels each, gave her fifteen hundred training pairs.
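Building those pairs might look something like the sketch below. The 4x4 placeholder grids, the noise size `sigma`, and the name `make_pairs` are assumptions for illustration; the real layouts would come from Kvrothja.

```python
import random

def make_pairs(layouts, levels=50, sigma=0.2, seed=0):
    """Build (level, noisy input, less-noisy target) training examples."""
    rng = random.Random(seed)
    pairs = []
    for layout in layouts:
        current = layout  # noise level 0: the clean layout
        for level in range(1, levels + 1):
            noisier = [[cell + rng.gauss(0.0, sigma) for cell in row]
                       for row in current]
            # Input is the grid at `level`; target is the grid at `level - 1`.
            pairs.append((level, noisier, current))
            current = noisier
    return pairs

# Thirty placeholder 4x4 layouts standing in for the real designs
layouts = [[[float((i + r + c) % 3) for c in range(4)] for r in range(4)]
           for i in range(30)]

pairs = make_pairs(layouts)
print(len(pairs))  # 1500
```

No one has to label anything by hand: the less-noisy target is simply the grid from one step earlier.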

Trviksha: The denoiser does not need to create a layout from scratch. It only needs to remove a small amount of noise from an already partially structured layout. That is a much easier task.

Blortz: Each step is a small correction. Fifty small corrections, chained together, produce a large transformation — from noise to structure.

She trained the denoiser on all thirty of Kvrothja's layouts. After training, she tested the reverse process: starting from pure random noise and applying the denoiser fifty times.
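The reverse process is just a loop. In the sketch below, `generate` chains whatever single-step denoiser it is given. Since a trained network cannot fit in a short example, `toy_denoise_step` is a stand-in that nudges each cell toward one fixed, made-up `PATTERN`. It is not a trained network and all three names are hypothetical; only the chaining is the point.

```python
import random

# Made-up target pattern for the stand-in denoiser (0 = grass, 1 = fence, 2 = trough)
PATTERN = [[0.0, 1.0, 0.0],
           [0.0, 2.0, 0.0],
           [1.0, 1.0, 1.0]]

def toy_denoise_step(grid, level):
    """Stand-in for a trained network: move each cell 10% of the way toward PATTERN."""
    return [[cell + 0.1 * (target - cell) for cell, target in zip(row, target_row)]
            for row, target_row in zip(grid, PATTERN)]

def generate(denoise_step, rows, cols, steps=50, seed=0):
    """Start from pure noise and apply the denoiser once per noise level."""
    rng = random.Random(seed)
    grid = [[rng.gauss(0.0, 1.5) for _ in range(cols)] for _ in range(rows)]
    for level in range(steps, 0, -1):  # level 50 down to level 1
        grid = denoise_step(grid, level)
    return grid

result = generate(toy_denoise_step, rows=3, cols=3)
for row in result:
    print([round(cell, 2) for cell in row])
```

Each pass makes only a small correction, yet after fifty passes the grid has moved from noise to something very close to the pattern. A real denoiser would have learned its corrections from the training pairs instead of being handed a single pattern.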

The result was a new layout. It was not identical to any of Kvrothja's thirty originals, because the random starting point made an exact copy very unlikely. But it shared their structure: water troughs were placed near the base of slopes (where gravity would assist distribution), fences followed the contour lines (preventing animals from wandering off steep sections), and shelters were tucked into leeward positions (protected from wind).

Kvrothja: This is a reasonable design. Not exactly what I would have drawn, but a competent starting point. The water placement is good. The fencing follows the terrain properly. There are some details I would adjust, but the overall structure is sound.

Trviksha: The network learned the patterns of good layouts — where infrastructure tends to go relative to terrain features. When it denoises from randomness, it reconstructs those patterns. Each step removes a little chaos and adds a little structure, guided by what it learned from the training examples.

Different Starting Noise

Each time she ran the process with different random starting noise, a different layout emerged. The outputs shared the structural patterns of the training set — they all looked like plausible hill-terrain designs — but differed in the specific placements.

Trviksha: The random starting point determines which specific layout emerges. Different noise, different layout. But all layouts share the learned structure.
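A toy version makes this visible. The sketch below assumes a stand-in denoiser that has "learned" just two made-up 2x2 patterns, which share a fence row but put the water trough in different corners. The names `TEMPLATES`, `distance`, `toy_denoise_step`, and `generate` are hypothetical, and the stand-in is far simpler than a trained network.

```python
import random

# Two made-up patterns: same fence row (1s), trough (2) in a different corner
TEMPLATES = [
    [[2.0, 0.0], [1.0, 1.0]],
    [[0.0, 2.0], [1.0, 1.0]],
]

def distance(a, b):
    """Sum of squared cell differences between two grids."""
    return sum((x - y) ** 2 for row_a, row_b in zip(a, b)
               for x, y in zip(row_a, row_b))

def toy_denoise_step(grid):
    """Stand-in denoiser: move 10% of the way toward the nearest known pattern."""
    nearest = min(TEMPLATES, key=lambda t: distance(grid, t))
    return [[cell + 0.1 * (target - cell) for cell, target in zip(row, target_row)]
            for row, target_row in zip(grid, nearest)]

def generate(seed, steps=50):
    """Run the reverse process from the starting noise chosen by `seed`."""
    rng = random.Random(seed)
    grid = [[rng.gauss(0.0, 1.0) for _ in range(2)] for _ in range(2)]
    for _ in range(steps):
        grid = toy_denoise_step(grid)
    return grid

samples = [generate(seed) for seed in range(5)]
for seed, grid in enumerate(samples):
    print(seed, [[round(cell, 2) for cell in row] for row in grid])
```

Each run settles near one of the two patterns, and which one depends only on the starting noise. Every output keeps the shared fence row, and none of them can land on a pattern the stand-in has never seen.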

Glagalbagal: Creation from randomness. You start with nothing, and the network sculpts it, step by step, into something that looks like it was designed.

Trviksha: Something that looks like the training examples. The network can only generate layouts that resemble the patterns it has seen. It will not invent a fundamentally new type of layout, one unlike anything in the training examples. It remixes and recombines the patterns of the training data.